Global Trends in Restricting Children’s Access to Social Media

The movement to regulate children’s use of social media has gained unprecedented momentum, spanning continents from Australia to Europe, and extending to Asia and the Americas. Across these regions, governments are enacting age-based restrictions, citing concerns about mental health, online addiction, bullying, and the broader societal impact of early exposure to digital platforms.

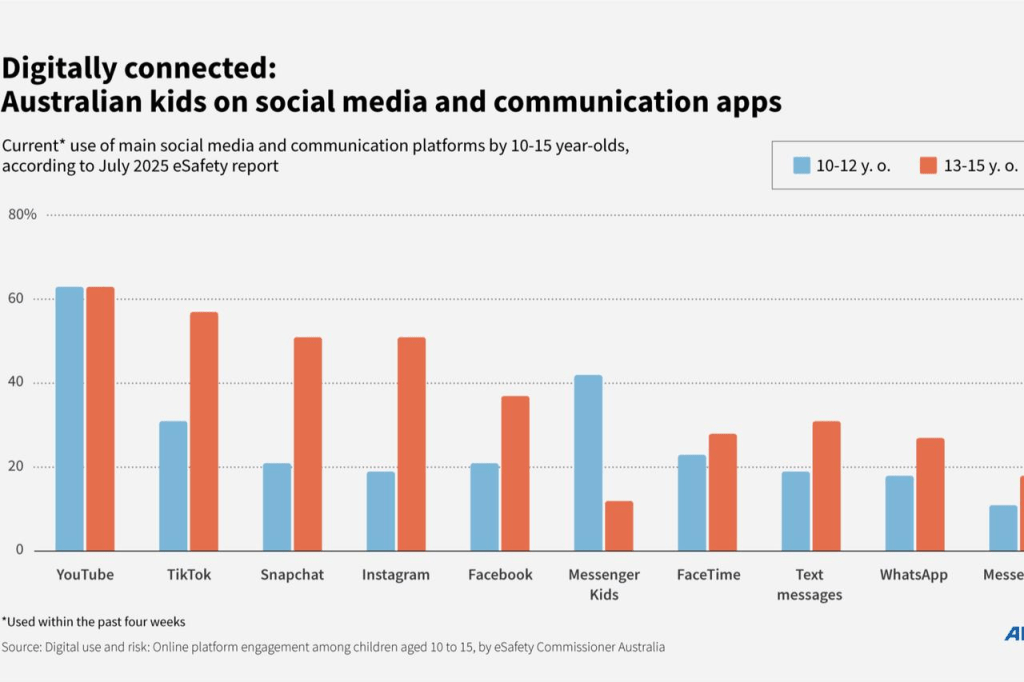

Australia

Australia has positioned itself as the global pioneer in social media regulation for minors. On December 10, 2025, the country imposed a nationwide ban on social media access for children under 16, encompassing major platforms such as ByteDance’s TikTok, Alphabet’s YouTube, and Meta’s Instagram and Facebook. Non-compliant companies face fines of up to AUD 49.5 million (USD 34.7 million), illustrating the seriousness of enforcement.

The Australian approach is noteworthy for its comprehensiveness: it does not merely recommend parental oversight but legally restricts access, signaling a shift toward treating social media as a public health issue rather than a private matter. This measure reflects growing evidence linking excessive social media use with anxiety, sleep disruption, and other mental health concerns among adolescents. Critics, however, question the practicality of enforcement, particularly regarding VPN circumvention and account age verification.

Austria

Austria’s conservative-led three-party coalition has announced plans to ban social media for children under 14. The legislative proposal, expected by June 2026, emphasizes age-appropriate digital exposure, with policymakers framing the restriction as essential for child safety and development. Austrian authorities aim to combine prohibitive measures with educational campaigns to increase digital literacy among minors.

While the age threshold is slightly lower than Australia’s, Austria’s approach highlights a balance between protection and gradual autonomy. Yet, the effectiveness of such bans will depend heavily on parental compliance and technological enforcement, which remain persistent challenges.

Brazil

Brazil’s Digital Child and Adolescent Law took effect on March 17, 2026, requiring that accounts of minors under 16 be linked to legal guardians. The law also bans addictive platform features such as infinite scrolling, targeting the structural design of social media that fosters excessive engagement.

This legislation reflects a broader awareness in Latin America of how platform design influences behavior. Brazil’s model focuses not only on access restrictions but also on modifying the underlying features that create dependency. Enforcement, however, will rely on both the digital literacy of parents and the technological responsiveness of platforms, which historically has been inconsistent in the region.

United Kingdom

In the UK, the government is evaluating an approach similar to Australia’s. Technology Minister Liz Kendall indicated in February 2026 that restrictions for under-16 users could be paired with stricter regulations for AI chatbots and online services. Notably, the UK is conducting pilot tests in 300 households to observe how restrictions on social media, screen time limits, and curfews affect sleep, family interaction, and academic performance.

This experimental approach reflects a research-driven model of policymaking, emphasizing measurable outcomes before full-scale legislation. While promising, it also raises questions about scalability and the diversity of home environments, which can significantly influence results.

China

China’s regulatory framework diverges from outright bans. The government introduced a “minors’ mode” requiring device-level restrictions and platform-specific rules that limit screen time according to age. While the minimum access age is 13, compliance is reinforced technologically rather than legally, reflecting China’s approach to digital governance: state-directed, technologically enforceable, and centrally monitored.

Although effective in controlling usage, China’s model has drawn criticism for limiting autonomy and parental discretion, and for embedding extensive state surveillance into everyday digital interactions.

Denmark

Denmark plans to ban social media for children under 15, while letting parents grant permission for access from the age of 13. This hybrid approach acknowledges the importance of parental oversight while establishing a clear legal minimum age. The policy aligns with Denmark’s broader social welfare philosophy, emphasizing child protection and the prevention of early exposure to potentially harmful online content.

France

France is taking a proactive legislative approach, moving to prohibit children under 15 from using social media in response to growing concerns about online bullying and mental health risks. The National Assembly approved the draft law in January 2026, but it must still pass through the Senate before final ratification.

The French model emphasizes both legal restriction and societal awareness. By setting the age at 15, France aligns with studies suggesting that mid-adolescence is a critical period for mental and emotional development. Critics, however, argue that enforcement will be challenging. Social media use is pervasive, and children often find ways to circumvent age restrictions, such as falsifying birthdates or using siblings’ accounts. Nevertheless, France’s legislative clarity provides a strong precedent for neighboring European countries considering similar measures.

Germany

In Germany, children aged 13 to 16 can access social media only with parental consent. Although this approach is less prohibitive than France’s, child protection advocates argue that parental controls and consent mechanisms are frequently insufficient. The German system relies heavily on parents actively monitoring and managing their children’s accounts, yet many minors remain on social media platforms without proper oversight.

Germany’s approach reflects a compromise between autonomy and protection. The lower age threshold acknowledges that early adolescence is a period when children benefit from social interactions online, while still attempting to impose a protective framework. The main challenge lies in enforcement, as German authorities do not possess mechanisms to systematically verify parental consent across all platforms.

Greece

Greece is on the verge of introducing a ban on social media for children under 15. According to sources cited by Reuters on February 3, 2026, the government is “very close” to implementing the restriction, reflecting a growing trend across Southern Europe.

While details are still emerging, the Greek policy appears to be influenced by EU guidelines and the experiences of neighboring countries. The proposed ban seeks to balance protection with parental involvement, though the enforcement mechanisms and penalties for non-compliant platforms remain unclear. Greece’s forthcoming regulation is particularly significant in the context of regional harmonization of child digital safety laws, as it could serve as a model for other Mediterranean nations facing similar challenges.

India

In India, the state of Karnataka became the first to ban social media access for children under 16 as of March 6, 2026. Other states, including Goa and Andhra Pradesh, are evaluating similar restrictions. This regional approach reflects India’s federal structure, where individual states can legislate on digital safety while the central government provides overarching guidance.

The policy was prompted by concerns from India’s chief economic advisor regarding the “predatory” nature of social media platforms, which retain children online for extended periods, exploiting engagement-driven algorithms. While Karnataka’s ban focuses on protecting mental health and preventing addiction, its success will depend on robust enforcement and the capacity of state authorities to monitor compliance. Neighboring states observing Karnataka’s experience will likely adapt their policies, potentially leading to a patchwork of regulations until a national standard is established.

Indonesia

Indonesia’s Ministry of Communication and Digital Affairs announced on March 6, 2026, that children under 16 would face restrictions on social media use. Starting March 28, 2026, “high-risk platforms,” including TikTok, Facebook, Instagram, and Roblox, will gradually deactivate accounts belonging to minors under 16.

The Indonesian approach is technologically oriented, using phased enforcement to minimize disruption and allow families to adjust. Minister Meutya Hafid highlighted the risks of addictive content and exposure to inappropriate material. By focusing on platform-level compliance and technological deactivation, Indonesia aims to create an enforceable model while preserving parental oversight. Critics, however, warn that children may circumvent restrictions using alternative accounts or VPNs.

Italy

Italy requires parental consent for children under 14 to create social media accounts. Users aged 14 and over can register independently without restriction. Italy’s approach reflects a compromise between protecting younger adolescents and allowing older teenagers to engage with digital platforms.

While less restrictive than other European countries, Italy emphasizes parental involvement, highlighting the importance of guidance rather than absolute bans. The system also demonstrates the challenges of balancing protection with digital autonomy, especially as 14-year-olds increasingly navigate complex online environments.

Malaysia

Malaysia announced in November 2025 that it would prohibit social media access for children under 16 starting in 2026. The Malaysian policy aligns with a global trend recognizing the potential harms of early exposure to social media, including mental health risks, cyberbullying, and online addiction.

The country’s strategy emphasizes both legal restriction and public education campaigns. Enforcement mechanisms are expected to include mandatory age verification, though the effectiveness will hinge on platform cooperation and technological implementation. Malaysia’s policy reflects a broader Southeast Asian concern about children’s digital well-being, influenced by both local social norms and international examples such as Australia.

Norway

Norway has proposed raising the minimum age for accepting social media terms from 13 to 15, while still allowing parents to provide consent for younger children. Additionally, the government is working on legislation that would set an absolute minimum age of 15 for social media access.

Norway’s model emphasizes a balance between legal restriction and parental involvement, reflecting the country’s child-centric social policies. By combining a legal age limit with parental oversight, Norway attempts to protect minors from early exposure to potentially harmful online content, while still acknowledging the role of parental guidance. Critics, however, note that enforcement is challenging and relies heavily on both platform compliance and parental monitoring.

Poland

In Poland, the ruling party announced on February 27, 2026, a new draft law banning social media for children under 15. Platforms would be held accountable for implementing robust age verification mechanisms.

Poland’s approach represents a proactive legislative effort to shift responsibility to social media companies, ensuring that platforms cannot passively allow underage users. While the approach is promising in concept, child protection advocates remain skeptical about technical enforcement and the ability of companies to prevent falsified accounts. Nevertheless, the proposed legislation signals a clear commitment to prioritizing children’s safety over platform convenience or engagement metrics.

Portugal

Portugal’s parliament approved a draft law on February 12, 2026, requiring explicit parental consent for children aged 13 to 16 to access social media. Companies that fail to implement these measures could face fines of up to 2% of their global revenue.

This policy illustrates a strong regulatory stance within the EU, linking legal obligations to significant financial penalties. Portugal’s emphasis on parental consent ensures that guardians are directly responsible for their children’s digital activity, while also holding companies accountable for compliance. The approach sets a precedent for the integration of accountability and enforcement mechanisms at a continental level.

Slovenia

Slovenia is preparing legislation to ban social media for children under 15. The announcement on February 6, 2026, by Deputy Prime Minister Matej Arcon highlights a coordinated effort to harmonize child protection policies with EU standards.

The Slovenian approach reflects regional trends in Southern and Central Europe, emphasizing legal restriction while seeking to maintain parental involvement. Effective enforcement remains a challenge, but the legislative clarity provides a framework for monitoring and penalizing non-compliant platforms.

Spain

Spain has committed to banning social media for children under 16, with mandatory age verification systems to be implemented by platforms. The proposed ban, however, must navigate the country’s complex parliamentary structure before enactment.

Spain’s policy aligns with the broader EU movement toward standardizing child protection in digital spaces. By combining a clear age threshold with technological verification, the government seeks to ensure both legal compliance and practical enforcement. Nonetheless, the fragmented nature of Spain’s governance may complicate nationwide implementation.

United States

The United States relies primarily on the Children’s Online Privacy Protection Act (COPPA), which prevents companies from collecting personal data from children under 13 without parental consent. Various states have enacted additional laws requiring parental permission for social media access. However, many of these initiatives have faced legal challenges on freedom-of-expression grounds.

Unlike most European and Asian countries, the U.S. does not enforce blanket age bans. Instead, it emphasizes data protection and parental oversight. While this approach preserves individual choice, it leaves significant gaps in safeguarding children from addictive content and exposure to harmful online interactions.

Analysis and Observations

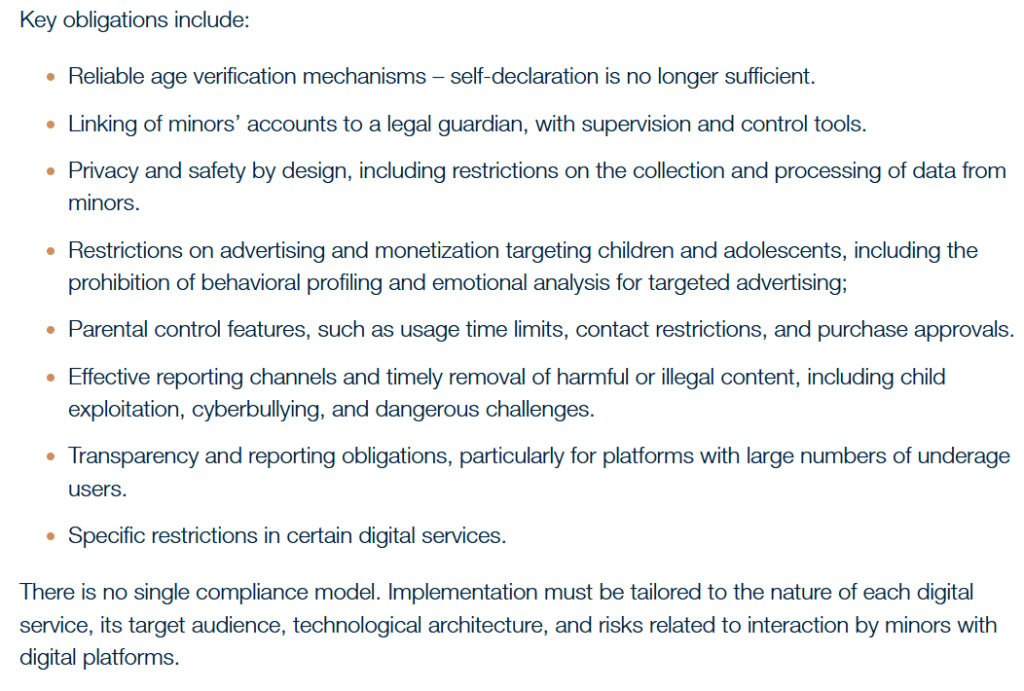

Across continents, the trend is clear: governments are increasingly treating social media as a public health and safety concern. Approaches differ, from Australia’s strict nationwide ban for under-16s to the U.S.’s focus on privacy and parental consent. European countries generally favor age thresholds between 13 and 16, often complemented by parental oversight or mandatory platform verification. Asian countries like China and Indonesia focus on technological enforcement, whereas Latin American countries such as Brazil combine account linkage with design changes to reduce addiction risks.

Despite these efforts, enforcement challenges persist. VPN circumvention, falsified accounts, and inconsistent parental oversight can undermine legal measures. Yet, the growing global consensus indicates a shared recognition: early exposure to social media carries real risks to mental health, academic performance, and family life, and legal frameworks must evolve to mitigate these harms.

Looking at the global landscape, it is clear that the regulation of children’s access to social media has moved from being a niche concern to a central issue of public policy. From Australia, which has imposed the world’s strictest ban for under-16s, to European countries like France, Portugal, and Spain, which are pursuing age thresholds backed by parental consent or mandatory verification, there is an unmistakable trend toward legal intervention. In Asia, China and Indonesia are leveraging technological enforcement to limit screen time and access, while in India and Brazil, regional and national frameworks are beginning to address both account control and platform design features that promote addictive behaviors. Even in the United States, where the emphasis remains on privacy through COPPA and parental consent, the conversation around minors’ safety is growing louder, highlighting a shared global concern: children’s mental health, sleep patterns, family interactions, and overall well-being are increasingly at risk in the digital space.

Across these diverse approaches, certain patterns emerge. Countries that combine clear legal age limits with active parental involvement and platform accountability tend to create more enforceable frameworks. Conversely, models that rely mainly on parental consent and oversight, like those in Germany and the U.S., face persistent challenges in practical compliance. Technological measures, such as those implemented in China and Indonesia, offer greater enforceability but raise questions about autonomy and privacy. Meanwhile, legislation in countries like Brazil and Portugal is particularly innovative in addressing platform design and linking corporate accountability directly to compliance, signaling a new era of proactive regulation.

From my perspective, this global shift reflects a recognition that social media is not merely a tool for communication or entertainment; it is a powerful environment that can shape the cognitive, emotional, and social development of children. Governments are no longer content to leave regulation to voluntary corporate policies or parental discretion alone. While challenges remain—such as circumvention, enforcement, and potential pushback from technology companies—the trajectory is clear: protecting minors online requires coordinated legal frameworks, technological solutions, and public education. Ultimately, I see these developments as a critical step toward balancing digital freedom with child protection, and they underscore the urgency of global cooperation to create safer online spaces for the next generation.
