Introduction & Framing: “Protecting Children” in the ‘Digital Space’ as a Regulatory Imperative

In March 2026, the European Union escalated enforcement under its Digital Services Act (DSA) by launching formal investigations into the widely used social media platform Snapchat, as well as four major adult content websites, on the basis that these services fail to implement effective measures to protect minors online. This regulatory action reflects an increasingly urgent policy objective within the EU: to establish robust, enforceable safeguards that prevent children from accessing harmful digital environments or being exposed to predatory behavior with systemic consequences for their physical and psychological welfare.

What distinguishes this moment in digital governance is the EU’s investment in a proactive, preventive regulatory stance that refuses to treat platform self‑regulation as sufficient absent demonstrable protective mechanisms for vulnerable users.

The regulatory architecture underpinning this intervention is the Digital Services Act (Regulation (EU) 2022/2065), which sets forth obligations for all intermediaries providing digital services within the European Union. The DSA imposes graduated responsibilities, ranging from content moderation to age assurance, and places more stringent duties on entities designated as Very Large Online Platforms (VLOPs) because of their reach and capacity to influence user behavior.

Platforms with tens of millions of European users are required to conduct systemic risk assessments, adopt effective mitigation strategies, and demonstrate that age verification systems are not merely formalistic but capable of denying underage users access to age‑restricted areas of the internet.

At a strategic level, the EU’s decision to question the sufficiency of platforms’ compliance signals a recalibration of digital governance toward safeguarding fundamental rights, with child protection as a core component rather than an ancillary concern. This regulatory priority aligns with broader international efforts — including UN policy initiatives and OECD reporting — that emphasize the need for digital safety and the elimination of exploitative mechanisms targeting youth. This moment marks a shift away from laissez‑faire digital markets toward a model in which democratic societies exert regulatory authority to shape safe digital public spheres.

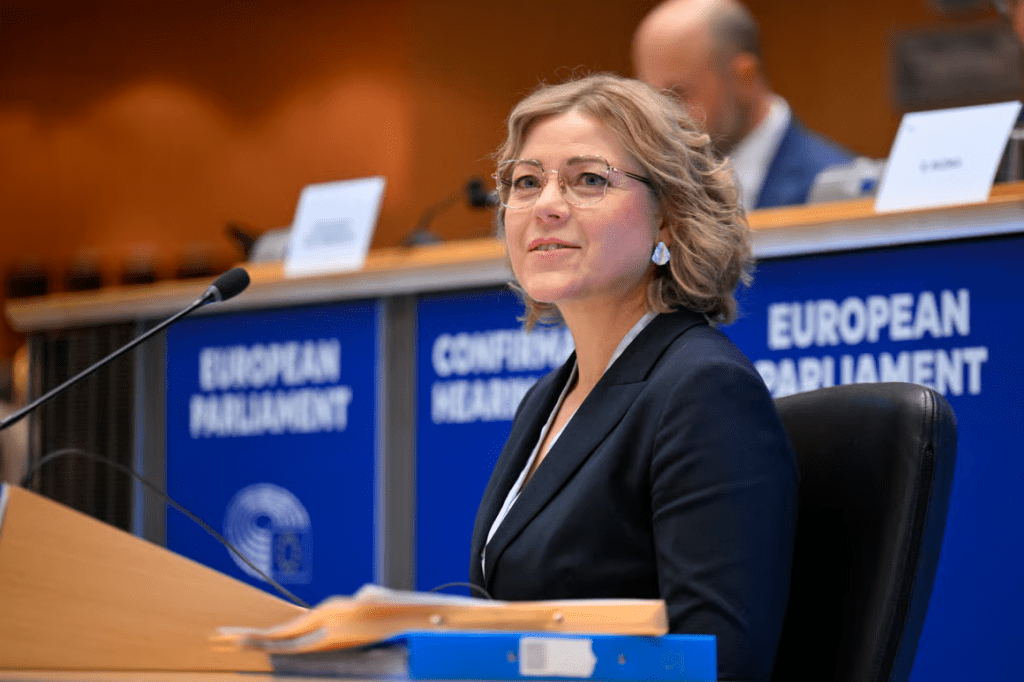

Henna Virkkunen, the European Commission’s Executive Vice‑President for Tech Sovereignty, Security, and Democracy, has articulated a position that underscores the EU’s normative commitment to child safety online. In her public statements announcing the inquiries, she emphasized that children are accessing adult content at increasingly younger ages and that platforms must adopt “robust, privacy‑preserving and effective measures” to uphold minors’ safety.

By foregrounding both the ethical imperative and the legal obligations embedded in the DSA, Virkkunen positions regulatory oversight not as punitive, but as an essential counterbalance to digital harms that have transnational and long‑term developmental implications for children.

This paper advances the argument in alignment with Virkkunen’s expressed view: that regulatory authorities are justified in taking decisive action to hold large digital service providers accountable for systemic protection failures, and that such intervention is both legally mandated under the DSA and morally necessary in a digital age where the risks to minors’ well‑being are pervasive and profound. To support this position, the subsequent sections will:

- Analyze the legal framework of the Digital Services Act as it pertains to age verification and child safety obligations,

- Examine the specific shortcomings in current platforms’ compliance logic that triggered the investigations,

- Explore the broader social and psychological risks associated with unregulated access to harmful content by minors,

- Situate the EU’s approach within comparative international regulatory trends, and

- Articulate policy recommendations consistent with both legal standards and child rights principles.

Legal Framework of the ‘Digital Services Act’ and Child Protection Duties

The Digital Services Act (DSA), formally enacted in 2022 and progressively enforced since 2023, constitutes a cornerstone of the European Union’s digital regulatory architecture. Its primary aim is to create a harmonized legal environment for online services while simultaneously protecting fundamental rights, particularly the rights of vulnerable populations such as children. Unlike prior legislation that relied heavily on self-regulation by platforms, the DSA establishes binding obligations with clear enforcement mechanisms, including the potential for substantial financial penalties in cases of non-compliance.

1. Obligations for Very Large Online Platforms (VLOPs)

Some online platforms, including the five specifically named in these investigations, have at least 45 million average monthly active users in the EU and are classified as Very Large Online Platforms (VLOPs). The Digital Services Act (DSA) requires these platforms to:

- Conduct annual systemic risk assessments that identify potential harms to users, including minors.

- Implement effective mitigation measures proportional to the identified risks, including content filtering, moderation, and algorithmic adjustments that reduce exposure to harmful material.

- Establish robust age verification mechanisms, ensuring that underage users cannot access adult content or interact with material that poses psychological or developmental risks.

- Maintain transparent reporting and documentation of compliance, enabling regulatory authorities to assess both procedural and substantive adherence to legal requirements.

These obligations are non-discretionary. Failure to implement them adequately exposes platforms to penalties of up to 6% of annual global turnover, a significant leverage tool designed to compel compliance. (digital-strategy.ec.europa.eu)
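To make the scale of these figures concrete, the short Python sketch below works through the two numbers cited above: the 45 million user threshold and the 6% ceiling on fines. The function names and the sample turnover are hypothetical and chosen only for illustration. The sketch is a back-of-the-envelope calculation, not a statement of how the Commission designates platforms or sets a penalty in practice.

```python
# Illustrative arithmetic only. The two constants come from the text above;
# the function names and the example figures are assumptions for this sketch.

VLOP_USER_THRESHOLD = 45_000_000  # average monthly active users in the EU
MAX_FINE_PERCENT = 6              # ceiling: 6% of annual global turnover


def meets_vlop_threshold(eu_monthly_active_users: int) -> bool:
    """Return True if the user count reaches the 45 million threshold."""
    return eu_monthly_active_users >= VLOP_USER_THRESHOLD


def max_fine_eur(annual_global_turnover_eur: int) -> int:
    """Return the upper bound of a fine, in whole euros (integer arithmetic)."""
    if annual_global_turnover_eur < 0:
        raise ValueError("turnover cannot be negative")
    return annual_global_turnover_eur * MAX_FINE_PERCENT // 100


if __name__ == "__main__":
    # Hypothetical platform: 60 million EU users, EUR 2 billion global turnover.
    print(meets_vlop_threshold(60_000_000))
    print(max_fine_eur(2_000_000_000))
```

For a hypothetical platform with EUR 2 billion in annual global turnover, the ceiling is EUR 120 million, which illustrates why the penalty functions as real leverage rather than a routine cost of doing business.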

2. Age Verification and Child Safety Provisions

Central to the current EU investigations is the effectiveness of age verification systems. The DSA requires that platforms employ technologies capable of reliably confirming a user’s age without unnecessarily compromising privacy. Methods may include third-party verification services, AI-driven recognition combined with human oversight, or other technically sound mechanisms that prevent minors from accessing adult content.

The AP News article emphasizes that platforms under investigation have demonstrated deficiencies in this area, suggesting that existing verification protocols are insufficiently rigorous or easily circumvented. From Henna Virkkunen’s perspective, this represents a direct threat to child safety that warrants regulatory intervention, not merely advisory guidance or after-the-fact remediation. Her position underscores the principle that preventive compliance is superior to reactive enforcement, particularly when the users in question are minors.

3. Content Moderation and Transparency Duties

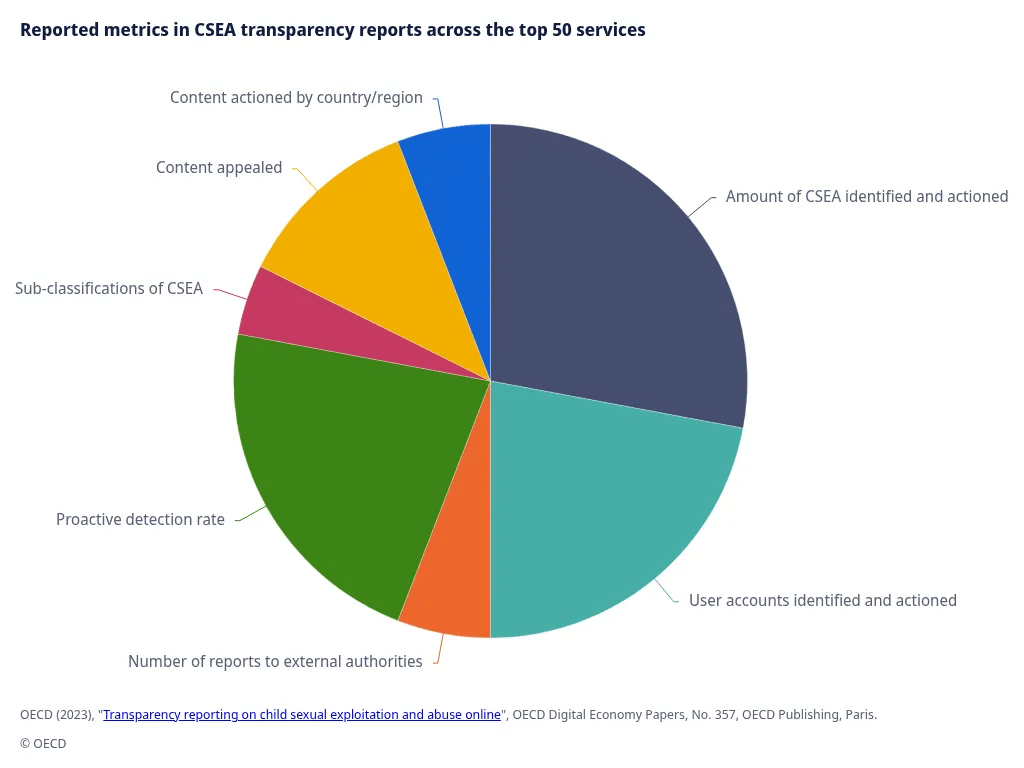

Beyond age verification, the DSA imposes obligations regarding content moderation. Platforms must:

- Proactively detect illegal content, including sexual exploitation or predatory interactions involving minors.

- Ensure moderation processes are transparent, auditable, and accountable, allowing regulators to verify that platforms are not merely applying superficial or token measures.

- Provide mechanisms for reporting unsafe content and for timely removal of harmful material, with clear escalation procedures for high-risk cases.

These rules integrate child protection directly into operational governance frameworks, ensuring that platforms cannot claim ignorance of exposure risks. As Virkkunen has consistently argued, platforms have an affirmative duty to prevent foreseeable harm to children, and the DSA codifies this responsibility in enforceable terms.

4. Enforcement and Regulatory Oversight

The EU’s investigations into Snapchat and adult platforms exemplify the operationalization of the DSA’s enforcement mechanisms. By opening formal inquiries, the Commission exercises its authority to:

- Request detailed compliance documentation and systemic risk assessments.

- Evaluate the adequacy of age verification technologies and moderation systems.

- Impose interim measures or, if non-compliance persists, substantial fines to ensure corrective action.

The legal rationale aligns with Henna Virkkunen’s philosophy: regulators must act decisively when platforms fail to protect minors, as passive oversight would otherwise permit harm to proliferate across digital environments. This enforcement paradigm illustrates the EU’s commitment to preventive, principle-driven regulation rather than relying solely on reactive, incident-based responses.

Analysis of Platform Deficiencies and Implications for Child Safety

The EU’s March 2026 inquiries into Snapchat and four adult content platforms reveal systematic deficiencies in compliance with child protection obligations under the Digital Services Act. While platforms often emphasize innovation, user engagement, and privacy considerations, these priorities cannot supersede the fundamental right of minors to a safe digital environment.

From a legal and ethical standpoint, these failures can be categorized into three primary areas: age verification inadequacy, content moderation lapses, and transparency shortcomings.

1. Age Verification Inadequacy

The AP News reporting highlights that Snapchat and adult platforms rely on self-declared age or minimally intrusive verification methods, which are easily circumvented. Such mechanisms fail to ensure that children are effectively excluded from age-restricted content.

- Technical Limitations: Platforms often employ soft barriers such as “click-to-verify” prompts, which assume good faith from users and fail to account for minors’ capacity to bypass restrictions.

- Consequential Risks: Inadequate age verification exposes children to sexual content, online grooming, and predatory interactions, which can result in both immediate psychological harm and long-term developmental trauma.

- Regulatory Gap: The DSA mandates robust, demonstrably effective age verification systems. Current methods on these platforms fall short of compliance standards, justifying regulatory scrutiny.

Henna Virkkunen has consistently stressed that age verification must be technology-enabled yet privacy-preserving, striking a balance between user data protection and safeguarding minors. Her stance emphasizes that failure to meet this threshold constitutes a breach of fundamental responsibilities, necessitating decisive regulatory intervention.

2. Content Moderation Lapses

Beyond age verification, the platforms under investigation exhibit systemic deficiencies in content moderation:

- Automated Filtering Weaknesses: Algorithmic detection mechanisms fail to flag substantial volumes of illegal or harmful content, particularly in nuanced scenarios where sexualized material is masked or misclassified.

- Insufficient Human Oversight: Reliance on automated systems without robust human review increases the likelihood of harmful content reaching minors.

- Lack of Escalation Protocols: Reports of abusive or exploitative material often remain unresolved, demonstrating inadequate internal governance structures.

Such lapses undermine the preventive intent of the DSA and expose children to high-risk digital environments. Virkkunen’s perspective underlines that platforms cannot claim technical impossibility as justification; the ethical and legal responsibility to protect minors is non-delegable. Regulatory authorities must therefore act to compel meaningful remediation.

3. Transparency and Accountability Deficiencies

Transparency obligations are integral to ensuring enforceable accountability. Platforms must provide regulators with clear, auditable evidence of compliance, including:

- Risk assessments and mitigation strategies,

- Age verification protocols,

- Content moderation policies and removal statistics.

The investigations cited by AP News suggest that Snapchat and the adult platforms fail to provide adequate documentation and transparency, preventing regulators from confidently evaluating their compliance. This opacity not only violates DSA mandates but also hinders public trust and undermines the EU’s broader objective of digital safety for children.

4. Implications for Child Safety and Societal Harm

The consequences of these deficiencies are profound:

- Psychological Impact: Exposure to sexually explicit material at an early age can contribute to anxiety, distorted perceptions of sexuality, and behavioral issues.

- Exploitation Risk: Inadequate safeguards leave children vulnerable to grooming and exploitation by malicious actors.

- Legal and Ethical Liability: Failure of platforms to protect minors invites regulatory sanctions and diminishes corporate social responsibility credibility.

Henna Virkkunen’s insistence on preventive regulation reflects an understanding that harm in digital spaces manifests quickly and pervasively, requiring preemptive measures rather than reactive enforcement. Her alignment with a child-first regulatory philosophy ensures that the best interests of minors take precedence over commercial priorities.

Comparative Perspective and International Implications

The European Union’s decisive enforcement under the Digital Services Act (DSA) is not occurring in isolation. Globally, governments and international bodies are grappling with similar challenges: balancing digital innovation with the protection of minors and broader human rights. By comparing the EU approach to frameworks in other jurisdictions, one can appreciate the distinctiveness and potential influence of Henna Virkkunen’s regulatory philosophy.

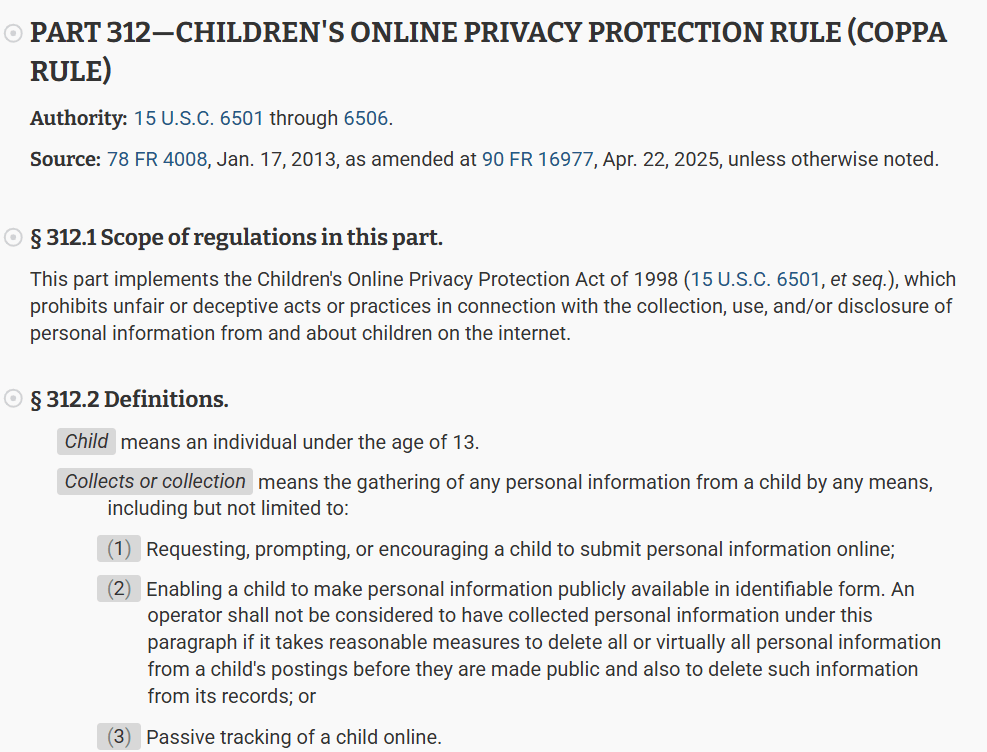

1. United States

In the U.S., child safety online is primarily governed by the Children’s Online Privacy Protection Act (COPPA), which mandates parental consent for collection of data from children under 13 and imposes restrictions on targeted advertising. However, COPPA does not extend to comprehensive content moderation obligations on adult-oriented platforms. This creates a regulatory gap: minors may still access harmful content even if their personal data is protected.

In contrast, the EU’s DSA directly addresses exposure to harmful content, establishing obligations that prevent children from accessing inappropriate material, rather than merely regulating data collection. Henna Virkkunen’s emphasis on preventive, multi-layered protections demonstrates a regulatory ambition that surpasses the U.S. model in terms of proactive child safety enforcement.

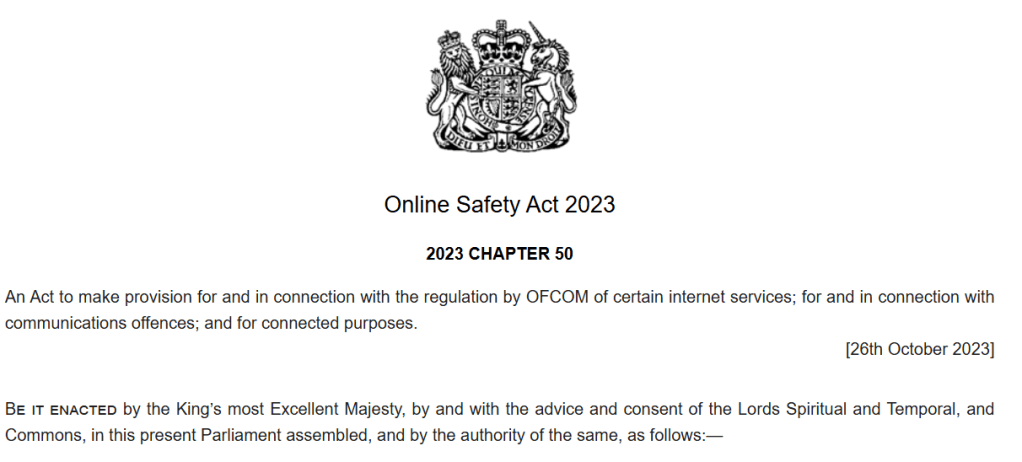

2. United Kingdom

Post-Brexit, the United Kingdom introduced the Online Safety Act (OSA), which mirrors some elements of the DSA by imposing duties on platforms to remove harmful content, including sexual exploitation and abuse material. The OSA also incorporates age verification and transparency requirements, though enforcement remains in early stages.

The EU’s approach under the DSA, particularly as applied in its scrutiny of Snapchat and adult platforms, exemplifies a stronger integration of preventive accountability. It demonstrates that regulators can require both technical efficacy and operational transparency, a combination Henna Virkkunen has highlighted as essential to child protection.

3. International Standards and Multilateral Initiatives

The United Nations Convention on the Rights of the Child (UNCRC) emphasizes the right of children to be protected from all forms of exploitation and harmful content. OECD and Council of Europe guidelines also promote digital literacy, online safety education, and platform responsibility.

The EU’s investigations, therefore, represent an alignment with international child rights standards, operationalizing abstract principles into legally enforceable obligations. Platforms operating across borders are compelled to meet the EU’s standards regardless of domestic regulations in other jurisdictions. This sets a precedent for global digital governance, potentially influencing multinational platforms to adopt EU-compliant safety measures worldwide.

4. Implications for Multinational Platforms

The EU’s proactive enforcement carries substantive implications for international digital service providers:

- Harmonization Pressure: Global platforms must align with EU child safety obligations to maintain access to the European market.

- Compliance by Design: Henna Virkkunen’s philosophy encourages integrating protective measures into core platform design, rather than retrofitting safeguards after regulatory intervention.

- Reputational Considerations: Non-compliance not only risks financial penalties but also undermines public trust and corporate social responsibility credibility on a global scale.

By situating the EU’s approach within a comparative framework, it becomes clear that Henna Virkkunen advocates for a regulatory model that is both legally rigorous and globally influential, promoting systemic protection of minors in digital environments.

Policy Recommendations and ‘Ethical Imperatives’

The EU’s regulatory intervention under the Digital Services Act (DSA) exemplifies a proactive, rights-based approach to safeguarding children in digital environments. The March 2026 investigations into Snapchat and major adult content platforms illuminate the gaps in current compliance mechanisms and provide an opportunity to articulate concrete recommendations for both regulatory authorities and platforms. These recommendations reflect a philosophy consistent with Henna Virkkunen’s vision: that child safety online is non-negotiable, technologically feasible, and ethically imperative.

1. Recommendations for Platforms

a. Implement Robust Age Verification Systems:

Platforms must adopt age verification mechanisms that are technically reliable, privacy-respecting, and resistant to circumvention. This may include third-party verification services, digital ID integration, or a combination of automated and human oversight. Henna Virkkunen emphasizes that prevention is more effective than remediation, making age verification the first line of defense.

b. Strengthen Content Moderation Frameworks:

Platforms should integrate multi-layered content moderation, combining AI-based detection with human review, to identify and remove harmful material targeting minors. They should also develop rapid escalation protocols for high-risk content, ensuring timely intervention and demonstrating operational accountability.
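One way to picture the layered approach described here is a simple triage rule that routes each item by an automated risk score and by user reports. The Python sketch below is a minimal illustration under stated assumptions: the `risk_score` field stands in for the output of some hypothetical classifier, and both thresholds are arbitrary placeholders rather than values drawn from the DSA or from any platform’s actual practice.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    REMOVE_AND_ESCALATE = "remove_and_escalate"  # high-risk: act now, alert specialists
    HUMAN_REVIEW = "human_review"                # uncertain: queue for a moderator
    ALLOW = "allow"                              # low-risk and unreported


@dataclass(frozen=True)
class Item:
    item_id: str
    risk_score: float            # hypothetical classifier output in [0, 1]
    user_reported: bool = False  # True if a user flagged the item


# Illustrative thresholds only; real values would need empirical tuning.
AUTO_REMOVE_THRESHOLD = 0.95
REVIEW_THRESHOLD = 0.50


def triage(item: Item) -> Action:
    """Route an item to automated removal, human review, or no action."""
    if not 0.0 <= item.risk_score <= 1.0:
        raise ValueError("risk_score must lie in [0, 1]")
    if item.risk_score >= AUTO_REMOVE_THRESHOLD:
        return Action.REMOVE_AND_ESCALATE
    # A user report always earns a human look, even at a low automated score.
    if item.risk_score >= REVIEW_THRESHOLD or item.user_reported:
        return Action.HUMAN_REVIEW
    return Action.ALLOW


if __name__ == "__main__":
    for item in (Item("a", 0.99), Item("b", 0.60), Item("c", 0.10, True), Item("d", 0.10)):
        print(item.item_id, triage(item).value)
```

The design choice worth noting is that a user report never leads directly to "allow": automated scoring and human judgment are combined, so a weakness in one layer does not become a gap in the whole system.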

c. Increase Transparency and Reporting:

Platforms must maintain auditable compliance documentation, including risk assessments, moderation metrics, and age verification efficacy reports. Transparency strengthens regulatory trust and serves as evidence that platforms are fulfilling their ethical and legal obligations to protect minors.

d. Foster a Culture of Child Safety by Design:

Digital safety should be embedded into platform architecture and corporate governance. Features such as default privacy settings for underage users, parental control tools, and age-appropriate content filters reflect a preventive philosophy consistent with Virkkunen’s stance.

2. Recommendations for Regulators

a. Proactive and Rigorous Oversight:

Regulators should continue systematic, risk-based audits of high-impact platforms, ensuring compliance is maintained and gaps are identified before harm occurs. The EU’s current inquiries exemplify this approach and should serve as a template for ongoing oversight.

b. Harmonization of International Standards:

The EU’s leadership in child protection regulation provides a framework for global alignment, encouraging multinational platforms to adopt standards that exceed minimal domestic requirements. Regulatory cooperation across borders strengthens the universality of protective measures.

c. Enforcement with Proportionality and Accountability:

While fines and sanctions are necessary, regulators should also promote collaborative remediation where platforms demonstrate a willingness to enhance safety measures. Enforcement must balance deterrence with constructive engagement, a principle that Henna Virkkunen underscores in her policy statements.

3. Ethical Imperatives

Beyond legal obligations, the protection of minors online is a moral and societal imperative. The DSA codifies responsibilities that reflect the fundamental rights of children to safety, dignity, and development, principles enshrined in the UN Convention on the Rights of the Child (UNCRC). Platforms have a duty not only to comply with laws but to uphold these ethical standards. Regulatory inaction in the face of systemic harm would constitute a dereliction of societal responsibility, a concern central to Virkkunen’s advocacy.

4. Long-Term Implications

Effective enforcement and compliance will have lasting effects:

- Reduction in exposure to harmful content, protecting psychological development.

- Enhanced corporate accountability, fostering responsible platform design and governance.

- Global precedent, positioning the EU as a model for child protection in the digital age.

- Strengthened trust in digital services, assuring parents and communities that online environments are safer for minors.

To Sum Up: Reinforcing Child Safety Through Regulatory Leadership

The European Union’s March 2026 investigations into Snapchat and major adult content platforms illustrate a pivotal moment in digital governance, where the rights and welfare of children are elevated to the forefront of regulatory priorities. Through the lens of the Digital Services Act (DSA), this action demonstrates that preventive, enforceable measures are essential to safeguarding minors in increasingly complex online environments.

This paper has examined the legal framework underpinning the DSA, highlighting obligations related to age verification, content moderation, and transparency. It has identified the systemic deficiencies in platform practices that necessitate regulatory intervention and assessed the psychological, ethical, and social risks posed to children by unregulated exposure to adult content. A comparative perspective situates the EU’s approach as both pioneering and influential, providing a global benchmark for platforms and policymakers alike.

Henna Virkkunen’s perspective — emphasizing proactive protection, technological rigor, and ethical accountability — resonates throughout this analysis. By advocating robust, privacy-preserving, and demonstrably effective safeguards, her stance aligns with the principle that child safety is non-negotiable, enforceable, and globally relevant. The EU’s actions against Snapchat and adult content platforms operationalize these principles, transforming abstract rights into tangible regulatory obligations.

“Forward-Looking” Perspective

Moving forward, the following considerations are central to sustaining effective child protection in digital spaces:

- Continuous Improvement of Age Verification Technologies: Platforms must evolve methods to remain resilient against circumvention while respecting privacy and data protection.

- Adaptive Content Moderation: As digital content evolves, moderation strategies must anticipate emerging risks, integrating AI, human oversight, and community reporting.

- International Harmonization: The EU model should inspire cross-border regulatory cooperation, promoting a globally consistent standard for child safety.

- Embedding Ethics into Digital Design: Ethical responsibility should guide platform development, ensuring safety and developmental integrity are integrated into the architecture of online services.

In conclusion, the EU’s regulatory intervention exemplifies a forward-thinking, child-centric approach that harmonizes legal rigor with ethical imperatives. By fully endorsing Henna Virkkunen’s philosophy, this paper asserts that digital safety for minors is a shared societal obligation, and that decisive, transparent, and preventive regulation is the most effective mechanism to protect children in the digital age. The March 2026 inquiries mark a critical step toward a safer, more accountable, and ethically responsible digital ecosystem, setting a precedent for global standards in child protection online.

“Children are our future; therefore, protecting them is the duty of every global citizen.” Attorney Bilge Kaan ÖZKAN
